The Trouble with Information: How students gather and evaluate online resources.

Intro: Increasingly, university students are required to find and synthesize online resources to complete academic assignments. I’m interested in the process students use to complete these assignments: where do they start, what are their priorities, where do they go, and how much time do they spend on the search process versus the composing process?

To answer these questions, I compared undergraduate and graduate student performance on a writing task that required them to gather information online and briefly respond to a writing prompt.

Drawing on Flower and Hayes’ (1981) cognitive model of composition, I am studying the gathering, evaluation, and integration processes involved in writing academic texts. I’m using an expert-novice comparison to get at differences in source use (Wineburg, 1991). To cast a broad net, I’m using a combination of established qualitative (Coiro, 2007) and quantitative (Azevedo & Cromley, 2004; Brand-Gruwel et al., 2005; Hölscher & Strube, 2000; Lazonder, 2000; Metzger, Flanagin, & Zwarun, 2003) models of studying online literacy practices. I started my study with two main questions:

1) How do experts and novices differ in their overall process when engaging in an online academic research task?

2) Which, if any, of these practices predict the quality of the final product?

I define experts and novices by years spent in school. Ideally, I would like to compare faculty and/or researchers with undergraduates, but for this study, my experts are pre-service teachers (graduate students) and my novices are first-week freshmen (undergraduates).

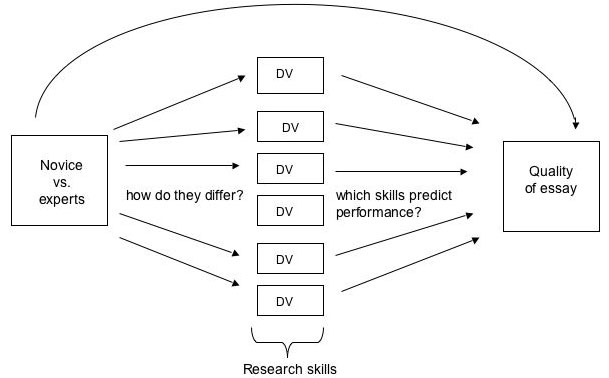

I use a mediational model that first examines how the two groups differ and then identifies predictors of high performance.

Method:

Data collected during Fall quarter 2007

154 participants

65 experts (TEP students enrolled in Copeland’s “Teaching with Technology” course)

89 novices (first quarter freshmen enrolled in Writing 2 and 2E courses)

Procedure and materials:

Held in Phelps computer labs, each session lasted 70 minutes

Participants were first given a pre-questionnaire that included questions about domain knowledge and interest, technical skills, and general demographic information. [show sample]

Participants were then given a prompt and told they had 50 minutes to write a 1-2 page response using information they found online. [show prompt]

During the gathering and composing phase, students were told when they had 30 and 10 minutes remaining.

After students submitted their work, they completed a post-questionnaire which included questions about their process (which sites they used, how they evaluate credibility) as well as follow-up questions about domain knowledge and interest. [show example]

Once students left, log files were collected from each computer. [show example] Log files included information about computer actions: how many sites they visited, how many times they revised their search term, how many times they returned to a site, how many links they followed within sites, and keystrokes (e.g., text entries and copy/paste).
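To make the log measures above concrete, here is a minimal Python sketch of how they might be tallied for one participant. The log format (time, event type, value), the event names, and the `summarize_log` function are all hypothetical, made up for illustration; they are not the schema the study's logging software actually produced. The sketch also assumes a "site" can be approximated by the host name of a URL.

```python
from urllib.parse import urlparse

# Hypothetical log: (seconds_from_start, event_type, value).
# Event names and URLs are illustrative assumptions only.
sample_log = [
    (5, "search", "topic a"),
    (12, "visit", "http://www.example.org/overview"),
    (60, "search", "topic a research"),
    (75, "visit", "http://www.example.com/study"),
    (110, "visit", "http://www.example.com/study/methods"),
    (140, "visit", "http://www.example.org/overview"),
    (200, "paste", "quoted passage"),
]


def summarize_log(events):
    """Tally the process measures described above for one participant."""
    searches = [value for _, kind, value in events if kind == "search"]
    hosts = [urlparse(value).netloc for _, kind, value in events if kind == "visit"]

    seen = set()
    previous = None
    returns = 0        # came back to a site after leaving it
    within_site = 0    # followed a link that stayed on the same site
    for host in hosts:
        if host == previous:
            within_site += 1
        elif host in seen:
            returns += 1
        seen.add(host)
        previous = host

    # A revision is any search whose term differs from the one before it.
    revisions = sum(1 for a, b in zip(searches, searches[1:]) if a != b)

    return {
        "sites_visited": len(seen),
        "search_revisions": revisions,
        "return_visits": returns,
        "within_site_links": within_site,
        "pastes": sum(1 for _, kind, _ in events if kind == "paste"),
    }


summary = summarize_log(sample_log)
print(summary)
```

Treating consecutive visits to the same host as within-site links is a simplification; a real log would likely need referrer information to tell a followed link from a typed address.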

Analysis:

Developed a rubric to score student written responses. [show rubric] The main challenge was figuring out how to measure use of source materials and, specifically, critical engagement with these materials. Used a combination of counting (quantitative) and holistic (qualitative) scoring.

Findings in progress

I am in the process of analyzing my data. What differences would you expect in the way experts and novices begin their search? My hope was that experts would use a database or at least Google Scholar or ERIC Digests to begin their search. My preliminary results show that 82% of the expert participants and 72% of the novices started with Google. Fewer than five participants in each group started with Wikipedia, Dogpile, or Ask. One expert started with ERIC and one novice started with Google Scholar.

Furthermore, as I start the paper scoring, I’m finding that a majority of participants in both groups used the top five Google results for their first or second search terms. At first, this finding was a bit disheartening, but then I found that the preliminary differences seem to lie in how each group uses the source. For example, experts tend to consider the implications of the source material rather than simply inserting it into their texts.

Participants in both groups use personal experience and observation, but differ in the ways they use it: a few of the experts evaluate their experience in terms of other source materials, while most of the novices use personal experience/observation to make unsupported generalizations.

Future directions:

–Complete data analysis

–Conduct textual analysis using Pairwise to quantify degree of re-mixing (Jenkins, 2007) occurring in student academic texts (compare student texts with web pages they visited).

–Study usage patterns in non-academic settings: task-specific and recreational browsing among different age groups.
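As a rough illustration of the textual-analysis item above, one simple way to quantify how much of a student text is carried over from a visited page is word n-gram overlap. This is a stand-in technique chosen for the sketch, not a description of how Pairwise works; the function names, the choice of three-word sequences, and the sample sentences are all assumptions.

```python
import re


def word_ngrams(text, n=3):
    """Return the set of lowercase word n-grams in a text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(student_text, source_text, n=3):
    """Fraction of the student's n-grams that also appear in the source."""
    student = word_ngrams(student_text, n)
    if not student:
        return 0.0  # text shorter than n words: nothing to compare
    return len(student & word_ngrams(source_text, n)) / len(student)


# Made-up sentences, for illustration only.
student = "the quick brown fox jumps"
source = "a quick brown fox jumps over"
ratio = overlap_ratio(student, source)
print(round(ratio, 2))
```

A high ratio suggests text lifted with little change, while a low ratio could mean either original writing or heavy paraphrase, so the number would still need to be read alongside the holistic scores.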

Cracking good research project. I look forward to the full results.

~Shaun